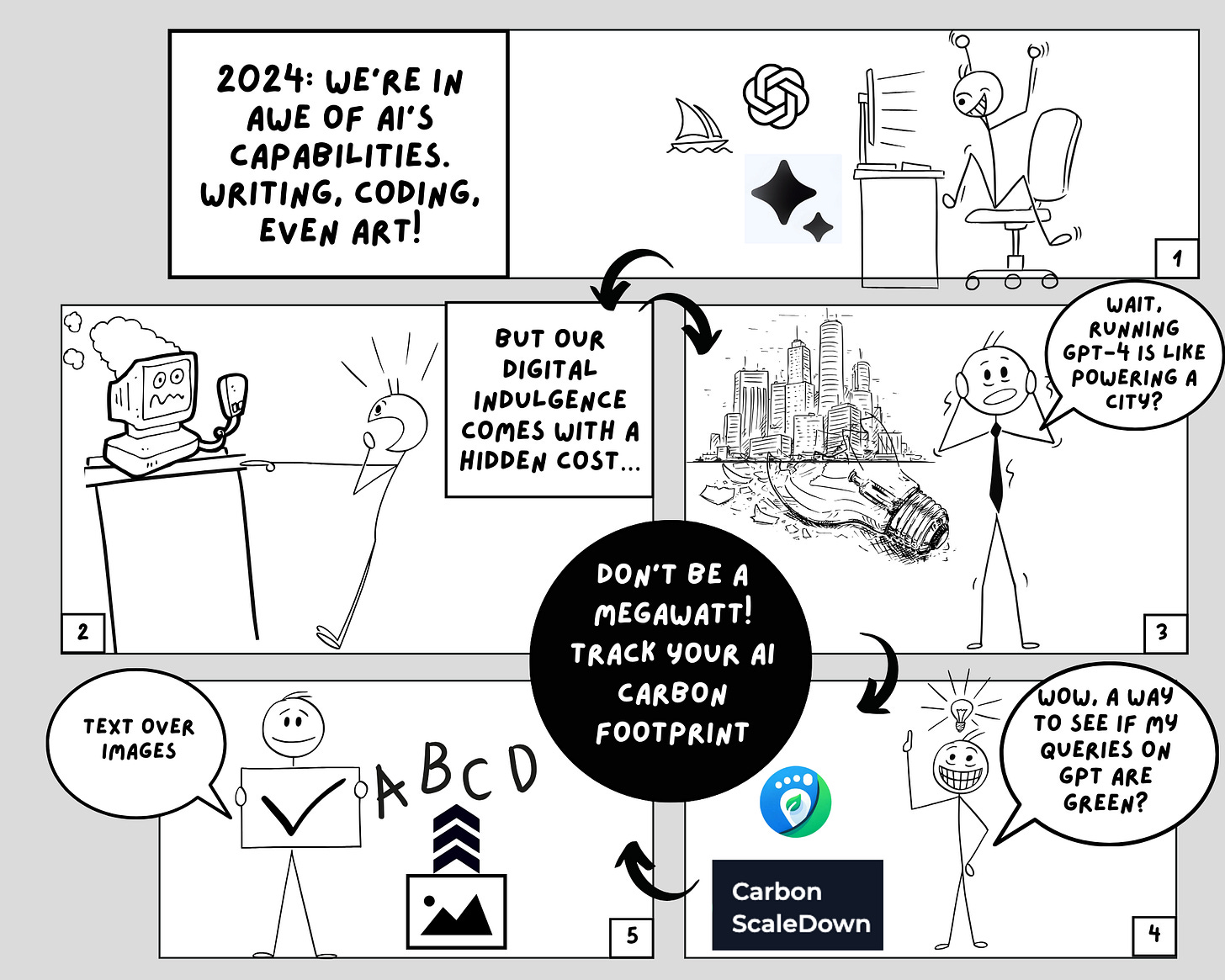

2024: The Year We Should Start Caring about the Carbon Footprint of LLMs

The New Metric for Responsible Digital Citizens

Hey there!

We've taken a step back to see what's behind the scenes. 2023 marked an unprecedented surge in the popularity of Large Language Models, led by the phenomenal success of ChatGPT. Beneath this explosion lurks a significant environmental concern: the carbon footprint of these models.

Our last issue highlighted that LLM training relies on massive data centers and thousands of GPUs. These facilities consume enormous amounts of electricity, equivalent to lighting up a small country for several hours, resulting in substantial carbon emissions. While the industry has made strides in acknowledging and addressing the carbon emissions from training LLMs, a critical aspect often goes unnoticed: the emissions from running apps powered by these models.

Carbon ScaleDown: Rethinking the Environmental Impact of our AI Conversations

ChatGPT, for instance, has been serving millions of daily active users for over a year, far exceeding its training duration. The implication is clear: the environmental impact of LLMs extends beyond development into their everyday use.

This is why we have some exciting news to share with you! We've created Carbon ScaleDown, a Chrome Extension that will help you measure and track the carbon footprint of your ChatGPT chats! This extension calculates emissions based on the generated tokens and images, offering users a tangible understanding of their digital environmental impact.

We wanted to give you a simple tool to help you be more conscious about your use of LLMs. We hope you'll love it!

In our first article, we talk more about our motivation behind building this extension and briefly describe how it works. We'll also take a closer look at some research papers that show us how much carbon is produced by different ML models and tasks. We'll see how the complex models we use today produce orders of magnitude more carbon emissions than the biggest models from just a few years ago. Read more below!

Calculating the Carbon Emissions of ChatGPT

While researchers have begun examining the factors that contribute to carbon emissions, focusing on specific tasks and models, research on the emissions resulting from model inference is still in its early stages.

Meta indicated that approximately 70% of their computational resources are allocated to model inference. This contrasts starkly with the 20% allocated to training and the 10% to experimentation. They also found that for many models, the carbon footprint of running them surpasses the emissions from both training and online training.

In our second blog, we delve deeper into how we calculate the carbon emissions of ChatGPT in our Carbon ScaleDown Chrome extension. Our analysis considers various factors, including the model's size, the efficiency of the underlying hardware, and the energy sources powering the data centers.
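To make the idea concrete, here is a minimal sketch of a token-based estimate. It is not the extension's actual formula: the function name, the parameter names, and every number below are illustrative placeholders rather than measurements. It only shows how per-token energy (which depends on model size and hardware efficiency), data-center overhead, and grid carbon intensity could be multiplied together.

```python
from dataclasses import dataclass


@dataclass
class EmissionAssumptions:
    """Hypothetical inputs; all values are placeholders, not measured data."""
    energy_per_token_wh: float       # Wh of accelerator energy per generated token
    pue: float                       # data-center power usage effectiveness (>= 1.0)
    grid_intensity_g_per_kwh: float  # grams of CO2e emitted per kWh of electricity


def estimate_emissions_g(tokens: int, a: EmissionAssumptions) -> float:
    """Return an estimate of grams of CO2e for `tokens` generated tokens."""
    if tokens < 0:
        raise ValueError("tokens must be non-negative")
    # Energy at the wall: per-token energy scaled by data-center overhead, in kWh.
    energy_kwh = tokens * a.energy_per_token_wh * a.pue / 1000.0
    # Convert energy to emissions using the grid's carbon intensity.
    return energy_kwh * a.grid_intensity_g_per_kwh


# Placeholder values chosen only to make the arithmetic easy to follow.
assumptions = EmissionAssumptions(
    energy_per_token_wh=0.001,
    pue=1.2,
    grid_intensity_g_per_kwh=400.0,
)
estimate = estimate_emissions_g(500, assumptions)
print(f"{estimate:.2f} g CO2e for 500 tokens (illustrative only)")
```

Swapping in a different grid intensity or per-token energy changes the result proportionally, which is why the energy source and hardware matter as much as the number of tokens.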

We also show that a simple ChatGPT query consumes roughly 1000 times more energy than a simple Google search, highlighting the significant environmental impact of frequent LLM usage. Read our blog below to find out more!

MLOps and ML Pulse Check

1. Model Merging

Model merging is all the rage now. It is a technique for combining two or more LLMs into a single model. It is also cost-effective, as it does not require a GPU. Surprisingly, model merging has proven to be highly effective and has resulted in many state-of-the-art models listed on the Open LLM Leaderboard, including one of our own! One of the most popular model merging frameworks is mergekit, which currently supports many merging techniques like Linear, TIES, SLERP, and DARE.
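For intuition, here is a toy sketch of two of the techniques named above, linear interpolation and SLERP, applied to flat lists of numbers standing in for model weights. This is not mergekit's code or API. The function names are hypothetical, and real merges operate tensor by tensor across full checkpoints.

```python
import math


def linear_merge(a, b, t):
    """Weighted average of two weight vectors; t=0 returns a, t=1 returns b."""
    return [(1 - t) * x + t * y for x, y in zip(a, b)]


def slerp_merge(a, b, t, eps=1e-8):
    """Spherical linear interpolation between two weight vectors."""
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a < eps or norm_b < eps:
        return linear_merge(a, b, t)  # direction is undefined for a zero vector
    dot = sum(x * y for x, y in zip(a, b))
    cos_omega = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    omega = math.acos(cos_omega)      # angle between the two vectors
    sin_omega = math.sin(omega)
    if abs(sin_omega) < eps:
        return linear_merge(a, b, t)  # (anti)parallel vectors: fall back to linear
    w_a = math.sin((1 - t) * omega) / sin_omega
    w_b = math.sin(t * omega) / sin_omega
    return [w_a * x + w_b * y for x, y in zip(a, b)]


# Two tiny stand-in "models" with orthogonal weights.
model_a = [1.0, 0.0]
model_b = [0.0, 1.0]
print(linear_merge(model_a, model_b, 0.5))
print(slerp_merge(model_a, model_b, 0.5))
```

Both functions only do arithmetic on existing weights, with no gradient steps, which is why a merge can run on a CPU.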

2. Mixture of Experts

Another technique that has gained popularity is Mixture of Experts, or MoE, popularised by the release of the Mixtral model. MoE models use a router to send each token to the experts that can handle it best. Although the entire model needs to be loaded into memory, the inference latency is lower compared to models of the same size because only a few experts are executed. While the small Mistral MoE model is open-sourced, you can only access the medium model via Mistral’s API.
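The routing idea can be sketched in a few lines. This toy example assumes top-2 routing with a softmax over the selected experts' scores, which is one common design. The experts here are plain functions on a single number and the names are hypothetical, so treat it as an illustration rather than any particular model's implementation.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits, k=2):
    """Pick the k highest-scoring experts and normalise their weights."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    top = ranked[:k]
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))


def moe_forward(token, router_logits, experts, k=2):
    """Run only the selected experts and mix their outputs by router weight."""
    output = 0.0
    for idx, weight in route_top_k(router_logits, k):
        output += weight * experts[idx](token)
    return output


# Four toy experts; only two of them run for any given token.
experts = [lambda x: x, lambda x: 2 * x, lambda x: 3 * x, lambda x: 4 * x]
router_logits = [0.0, 5.0, 5.0, -1.0]  # in a real model, produced by a learned router
print(route_top_k(router_logits))
print(moe_forward(1.0, router_logits, experts))
```

All four experts must exist in memory, but each token triggers only `k` expert calls, which mirrors the memory versus latency trade-off described above.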