Back from Japan with the Magic of Large Language Models & the Art of Prompt Engineering

In-depth Journey into Prompt Engineering in LLMs & Behind the Scenes of ChatGPT

Greetings!

We know it's been a while since you last heard from us. Why the silence, you ask? We've been traversing the globe, soaking in knowledge and experiences so that we can bring them back to you. Our most recent adventure took us to the enchanting Land of the Rising Sun—Japan.

Japan was truly a wonder, an intriguing blend of ancient traditions and cutting-edge technology. Much like that blend of old and new, Large Language Models (LLMs) sit at the intersection of established linguistic principles and pioneering ML developments.

As promised, we are back with some riveting tales (or blogs, as we like to call them) to feed your intellectual curiosity and continue our exploration of the ever-evolving world of LLMs.

Blog 1: The Art of Prompt Engineering

In our previous newsletter, we covered an introduction to LLMs and fine-tuning. Now that you've got a handle on the basics, let's take a step forward and explore the world of Prompt Engineering in the realm of LLMs. Much like training a bonsai tree to grow in a specific shape, prompt engineering involves guiding how our model responds to certain inputs. Our first blog takes you on a detailed journey through when and why to use Prompt Engineering, its types, and how to decide on the right approach. It's an art, a science, and a lot of fun!

Blog 2: The Magic Sauce Behind ChatGPT

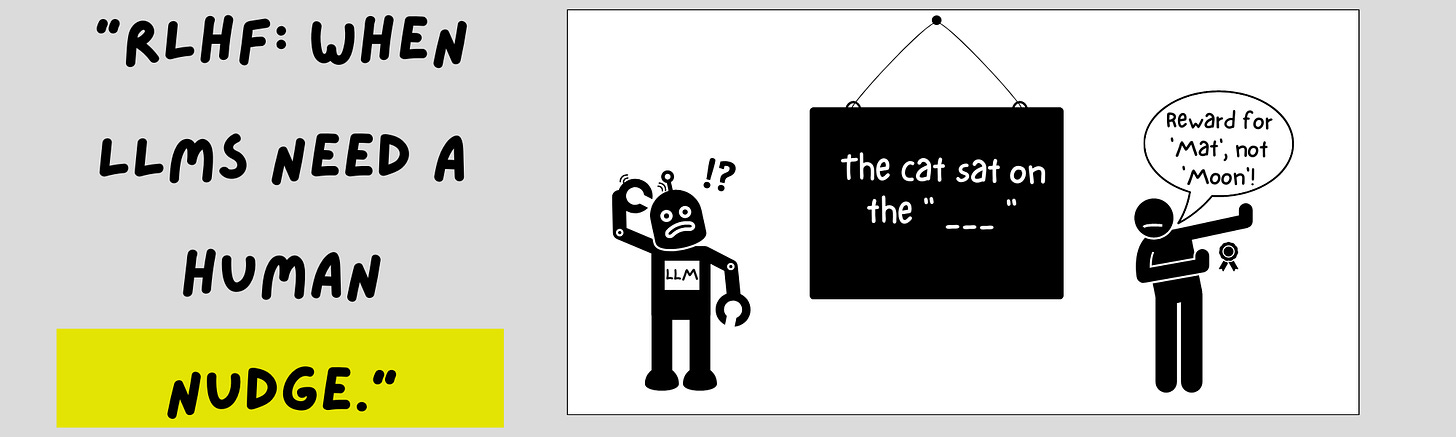

Have you ever wondered about the 'magic sauce' that makes ChatGPT tick? How does it understand your queries, craft coherent responses, and sometimes even throw in a witty comeback? Get ready to navigate through the history of LLMs, the hows and whys, the potential, and the challenges. You'll learn about the nuances of Reinforcement Learning from Human Feedback (RLHF) and Reward Modelling. We will also try our hand at RLHF with Hugging Face and GPT-2.

MLOps and ML Pulse Check

Anthropic announces Claude 2 and Constitutional AI: Anthropic has recently made its chatbot, Claude 2, accessible to the public. Anthropic also announced "Constitutional AI", its approach to making the model's generated content safer. It works by training the model on a set of principles that guide its decisions about the text it produces. The principles were drawn from documents including the 1948 UN Universal Declaration of Human Rights and Apple’s terms of service, the latter covering modern issues such as data privacy and impersonation. Claude 2 showcases impressive performance, scoring 76.5% on the Bar exam's multiple-choice section and surpassing the 90th percentile on the GRE reading and writing tests. However, the biggest improvement, in our view, is the expanded context length of 100k tokens. This means that you can submit a whole book in a single prompt! This will be useful when building chat applications, as the model can now draw on long-term contextual information. Despite the upgrades, Anthropic has kept its API pricing the same as for its previous model, Claude 1.3.

GPT-4 Function Calling and API Price Drop: Speaking of API pricing, about a month ago, OpenAI dropped the prices of their APIs even further! They dropped the pricing of their ada-002 model by 75% and gpt-3.5-turbo by 25%! However, another announcement that went under the radar was function calling for gpt-4 and gpt-3.5-turbo. So now, instead of relying only on free text like “Create a SQL query to find out how many new subscribers I got this month”, you can describe a function such as “create_sql_query(text)” to the model, and it can reply with structured arguments for calling that function, which your code then uses to produce the query!
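To make the function-calling idea a bit more concrete, here is a minimal sketch of the moving parts. It makes no API call: the `simulated_call` dictionary stands in for what a model might return, and its shape (a function name plus a JSON string of arguments) is modeled on the format OpenAI described at launch. The `create_sql_query` signature, the `subscribers` table, and the `created_at` column are all hypothetical names chosen for illustration.

```python
import json

# Hypothetical function description in the JSON-schema style used for
# function calling. The model sees this description, not the Python code.
CREATE_SQL_QUERY_SPEC = {
    "name": "create_sql_query",
    "description": "Count new subscribers since a given date.",
    "parameters": {
        "type": "object",
        "properties": {
            "since": {
                "type": "string",
                "description": "Start date in ISO format, e.g. 2023-07-01",
            },
        },
        "required": ["since"],
    },
}


def create_sql_query(since: str):
    """Return a parameterized query and its parameters (assumed schema)."""
    sql = "SELECT COUNT(*) FROM subscribers WHERE created_at >= ?"
    return sql, (since,)


def dispatch(function_call: dict, registry: dict):
    """Look up the requested function and call it with the decoded arguments."""
    func = registry[function_call["name"]]
    kwargs = json.loads(function_call["arguments"])  # arguments arrive as a JSON string
    return func(**kwargs)


# Stand-in for a model reply; a real application would read this from the API response.
simulated_call = {
    "name": "create_sql_query",
    "arguments": '{"since": "2023-07-01"}',
}

sql, params = dispatch(simulated_call, {"create_sql_query": create_sql_query})
print(sql, params)
```

The design point is that the model only proposes which function to call and with what arguments. Your own code decides whether and how to run it, which is why the sketch keeps the SQL parameterized rather than pasting model output into the query string.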

So, join us on this enlightening journey and let's embrace the knowledge and fun that LLMs bring. Thank you for tuning in and sticking with us on this ride. We're thrilled to be back in your inbox and hope to bring you even more engaging content in the future.